Problem

Environment Information:

vCenter: 4.1.0, Build 345043

ESXi: 4.1, Build 348481

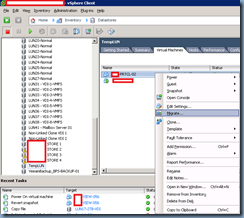

You need to move a virtual machine that is part of a Microsoft Cluster Service (MSCS) cluster to a new datastore. Because this virtual machine has RDM (Raw Device Mapping) hard disks, you shut down the virtual machine and use the Migrate… option to move the files:

Although the migration completes without errors, you notice that once you attempt to power on the virtual machine:

… you receive the following error:

Reason: Thin/TBZ disks cannot be opened in multiwriter mode..

Cannot open the disk '/vmfs/volumes/50f88947-7d389596-e168-0025b500001a/Some-Cluster-02/Some-Cluster-02_1.vmdk' or one of the snapshot disks it depends on.

VMware ESX cannot open the virtual disk "/vmfs/volumes/50f88947-7d389596-e168-0025b500001a/Some-Cluster-02/Some-Cluster-02_1.vmdk" for clustering. Verify that the virtual disk was created using the thick option.

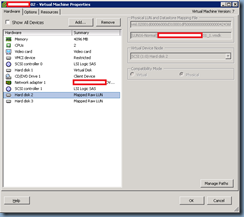

What’s strange is that when you view the configuration of the virtual machine, you see that the RDM drives have now become Virtual Disks:

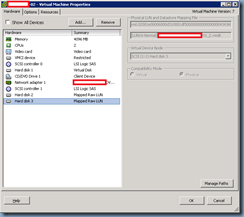

This differs from the original configuration before the migration, in which the same disks were attached to the virtual machine as RDMs.
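If you would rather confirm the change outside the client, the small descriptor file behind each `.vmdk` can be inspected. The following is only a minimal sketch, assuming the descriptor's `createType` field is `vmfsRawDeviceMap` or `vmfsPassthroughRawDeviceMap` for an RDM pointer and something else (for example `vmfs`) for a regular virtual disk. The sample descriptors and the `classify_descriptor` helper are hypothetical and are not taken from the virtual machine in this post.

```python
import re

# createType values assumed to mark an RDM pointer descriptor.
RDM_CREATE_TYPES = {
    "vmfsRawDeviceMap",             # virtual compatibility mode
    "vmfsPassthroughRawDeviceMap",  # physical compatibility mode
}


def classify_descriptor(text: str) -> str:
    """Return 'rdm', 'virtual-disk', or 'unknown' for VMDK descriptor text."""
    match = re.search(r'^\s*createType\s*=\s*"([^"]*)"', text, re.MULTILINE)
    if match is None:
        return "unknown"
    return "rdm" if match.group(1) in RDM_CREATE_TYPES else "virtual-disk"


# Hypothetical descriptor contents used only for illustration.
SAMPLE_RDM = '''# Disk DescriptorFile
version=1
createType="vmfsPassthroughRawDeviceMap"
RW 209715200 VMFSRDM "example-rdmp.vmdk"
'''

SAMPLE_FLAT = '''# Disk DescriptorFile
version=1
createType="vmfs"
RW 209715200 VMFS "example-flat.vmdk"
'''

if __name__ == "__main__":
    print(classify_descriptor(SAMPLE_RDM))
    print(classify_descriptor(SAMPLE_FLAT))
```

A disk that was an RDM before the migration but is now classified as a regular virtual disk matches the symptom described above.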

Solution

Note: the demonstration in this post is for cluster node 2, whose RDM disks originally referenced the RDM mapping files stored with cluster node 1.

I’m not sure if this is a bug, but the Migrate… option appears to replace the RDM mappings with actual virtual disks that are not accessible. To correct this problem, begin by removing the bad disks from the virtual machine:

Note: you can delete the disks with the “Remove from virtual machine and delete files from disk” option, but if you want to be absolutely safe, only remove them from the virtual machine without deleting the files, then clean up the leftover files once the VM is back up.

With the bad hard disks removed, proceed with adding the RDM disks back to the virtual machine:

Browse to cluster node 1 and add each of its RDM disks back to node 2:

Remember to add the cluster shared disks on different SCSI controllers than the system drive:

Also remember to change the SCSI Bus Sharing setting from None to Physical:
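Both reminders can be double-checked against the virtual machine's `.vmx` file before powering on. The sketch below is an illustration under stated assumptions, not a VMware tool: it assumes disks appear as `scsiN:M.fileName` entries, that bus sharing is stored as `scsiN.sharedBus`, and that the system drive sits on controller `scsi0`. The datastore path in the sample and the helper names are hypothetical.

```python
import re


def parse_vmx(text: str) -> dict:
    """Parse 'key = "value"' lines of .vmx text into a dict."""
    settings = {}
    for line in text.splitlines():
        match = re.match(r'\s*([^#\s=]+)\s*=\s*"(.*)"\s*$', line)
        if match:
            settings[match.group(1)] = match.group(2)
    return settings


def check_shared_disks(settings: dict, shared_files: set) -> list:
    """Return a list of problems found for the given shared disk files."""
    problems = []
    for key, value in settings.items():
        match = re.fullmatch(r"scsi(\d+):(\d+)\.fileName", key)
        if not match or value not in shared_files:
            continue
        controller = match.group(1)
        # Assumption: the system drive lives on controller scsi0.
        if controller == "0":
            problems.append(f"{value}: on scsi0 with the system drive")
        sharing = settings.get(f"scsi{controller}.sharedBus", "none")
        if sharing.lower() != "physical":
            problems.append(f"{value}: scsi{controller}.sharedBus is {sharing!r}")
    return problems


# Hypothetical path and .vmx fragment used only for illustration.
SHARED = "/vmfs/volumes/example-datastore/Some-Cluster-01/Some-Cluster-01_1.vmdk"
SAMPLE_VMX = f'''
scsi0.present = "TRUE"
scsi0:0.fileName = "Some-Cluster-02.vmdk"
scsi1.present = "TRUE"
scsi1.sharedBus = "none"
scsi1:0.fileName = "{SHARED}"
'''

if __name__ == "__main__":
    for problem in check_shared_disks(parse_vmx(SAMPLE_VMX), {SHARED}):
        print(problem)
```

In the sample, the shared disk is on a second controller, but bus sharing is still set to none, so the check reports one problem. Once `scsi1.sharedBus` is set to `physical`, the list comes back empty.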

Once the RDM disks have been properly added back, you should now be able to power on the virtual machine:

![clip_image002[4] clip_image002[4]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEh62m8RemJkGmV6LSUyi5SFTrUvKuExt4qLBx2eWFe6rMTKw24RCBDmLGW6OrgDjdE0tjnjfsrA_sY12DJrO4pD_yEB9VLjHEaB4Za3ZFuUet3YyYv_0BnNrWZPWyWQtmDlOXrXwxHuyf82/?imgmax=800)

![clip_image002[6] clip_image002[6]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEg6QApGOcWFRXW6_obF_7q5Iy52KRMB95lNVDcwBySIQw9UhfoYa6n2Dtbe1wrCPmOo34YW_GZtsjfot2Xge_hdjMqHwfj69vCiMofbXvVc6YVz6xoDfXRZcgLQ3NjP4-7Rv70cQzziD4_Z/?imgmax=800)

![clip_image002[8] clip_image002[8]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhYpyohG6t6yWkeHoB8mREKT1Hyst9NwgPHfysHNmgB32UKWr8RXg0sTR4VBxUo1-8vIl2NR6l3wjlQeIrEY8jnxJTJTps6uU8V8AUbnj8M_aS4kIsOeGOyBrxoLHmTrObvwVmy_8Ft5KZL/?imgmax=800)

![clip_image002[10] clip_image002[10]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEiucIIwdLPke2rVK7H1endHYCaOH52pjFVl6GdsiMSOd2DWtDjwKJHi0C3KqvqrjGL8KKOyTZjGK3OKAqOjSYalooaRtqPZPdvyvSzfztTeCIvhRKKm-dYRJY8kPEpgenTSYYcYxr0okh7t/?imgmax=800)

![clip_image002[12] clip_image002[12]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEiVFvdZGwg1w05XxnsliZJ06ZGR4R_9PTuTrx6fRoOlCAGnqmFLi1ZEsUAvKY-5ZNnvvWNb0CJUjqitK_B3clkeSiIlpnp0Tmr5_4401V0uET-fH1bpdhFLN6Fx5-_Zm5IFtEMBDW2-Q_7_/?imgmax=800)

![clip_image002[14] clip_image002[14]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEjI7GWVfexwF-IwEHn2qcgr2OzaPcGOtUqW78_ZHS9n4xOILU-hPaiqBUjeTIwKMCDYXXljHamp_yhF67fV10mY7ZicxofHogoxV4swyptqbe7wMU5UMUZqe9Jo_P6BAk0Y338RhypXnUZA/?imgmax=800)

![clip_image002[16] clip_image002[16]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEj0RfYfH5DlvfNunwxQ7-1keU7OcegqOQyEvTiwhDiXCu9oDgZdPhpGyFkNJlFLEF2urndXkwyhcehXD8hxwnGOyluKYmh5LLWe64-e_Xmud2NK4fZmna9X5IYSRCAr87KalViUbGNDA1SF/?imgmax=800)

![clip_image002[18] clip_image002[18]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhoiaaG5eq23EC-dbC9PRBe4CatjmE0AwVSvA_Amgl78xD1cWrWDZF7FXU1BurFbRKS14TLconR3MwrWGX8QcJimVf_XhoNUQg_NoZGVcj7pOnV18eziDpbbT8ZJZFWt_0RJOJnyp5I0l_m/?imgmax=800)
