Being new to Bermuda and spending most of my time trying to get settled in, I was starting to itch for some work that excited me. While I have yet to be assigned to a large project, I did end up getting assigned to a client who was experiencing sluggish performance in their vSphere environment. When I arrived on site, the first thing I did was look at the infrastructure they had:

Server: HP DL360 G7

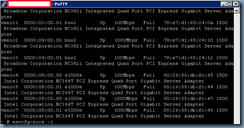

NIC: Onboard Broadcom NC382i Integrated Quad Port PCI Express Gigabit Server Adapter

NIC: Intel NC364T PCI Express Quad Port Gigabit Server Adapter

Host: ESXi 4.1 (Build: 260247)

I then logged into their vCenter with the vSphere Client. As I browsed through the network configuration, I immediately noticed that of the 8 ports spread across the 2 network cards, some were connected at 100Mbps and some at 1Gbps.

I personally didn’t feel that the vmnic setup was done in the best way possible, but that wasn’t the priority because something was obviously wrong with the connection speeds of the vmnics. Worse still, one of the vSwitches configured for storage was connected at 100Mbps. Since all of the ports were 1Gbps capable, my first thought was that either:

1. The vmnics were hard coded at 100Mbps.

2. The ports on the physical Cisco 3750 switches (configured in a stack) were hard coded at 100Mbps.

As I reviewed the settings in ESXi and asked one of our network engineers to look at the port settings on the physical switches, we found that some ports were set to auto-negotiate and others were hard coded at 100Mbps. After further review of all the ports on multiple switches and on all of the ESXi hosts, we found no consistent pattern in the configuration.
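Mixed settings like these are risky because of how auto-negotiation behaves when only one end takes part. When one end is hard coded and the other is on auto, the auto end can sense the speed through parallel detection but not the duplex, so it falls back to half duplex; if the hard-coded end is set to full, the result is a duplex mismatch. The Python sketch below is a simplified model of that rule, not a description of how ESXi or the Cisco 3750s implement it. `Fixed`, `predict_link` and `duplex_mismatch` are hypothetical names, and the model assumes gigabit-capable ends, a good cable, and forced settings of only 10 or 100Mbps.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass(frozen=True)
class Fixed:
    """A port hard coded to a speed in Mbps and a duplex ("full" or "half")."""
    speed: int
    duplex: str

# A port is either Fixed(...) or None, where None means auto-negotiate.
Port = Optional[Fixed]
Link = Optional[Tuple[int, str, str]]  # (speed, duplex at end a, duplex at end b)

def predict_link(a: Port, b: Port) -> Link:
    """Predict the link outcome, or None if no link is expected.

    Simplified model (assumption): both ends are gigabit capable, the cable
    is good, and forced settings are limited to 10 or 100 Mbps, because
    1000BASE-T relies on auto-negotiation.
    """
    for port in (a, b):
        if port is not None and port.speed not in (10, 100):
            raise ValueError("this model only covers forced 10/100 Mbps")
    if a is None and b is None:
        # Both ends advertise their abilities and agree on the best common mode.
        return (1000, "full", "full")
    if a is not None and b is not None:
        # Two forced ends only link up if their speeds agree.
        if a.speed != b.speed:
            return None
        return (a.speed, a.duplex, b.duplex)
    # One end on auto: parallel detection finds the speed but not the duplex,
    # so the auto end falls back to half duplex.
    fixed = a if a is not None else b
    if a is None:
        return (fixed.speed, "half", fixed.duplex)
    return (fixed.speed, fixed.duplex, "half")

def duplex_mismatch(link: Link) -> bool:
    """True if the link is up but the two ends disagree on duplex."""
    return link is not None and link[1] != link[2]

if __name__ == "__main__":
    vmnic = None                       # ESXi side left on auto-negotiate
    switch_port = Fixed(100, "full")   # hypothetical hard-coded switch port
    link = predict_link(vmnic, switch_port)
    print(link, duplex_mismatch(link))  # (100, 'half', 'full') True
```

This is also why leaving both ends on auto is generally the safer default: the only case in this model that avoids both a speed mismatch and a duplex mismatch without careful coordination is auto on both sides.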

From here, I asked the network engineer to simply hard code the switch ports at 1Gbps while I did the same on my end, but once we did that, the vmnic connection would go down. We then tried auto-negotiation on both sides and the same thing happened. We continued to mix and match the settings on both ends; sometimes it would connect at 100Mbps and sometimes at 10Mbps. There was no consistency: a port might connect at 1000Mbps, but as soon as I shut it down and brought it back up, it would connect at a lower speed.

After plowing through every configuration we could think of, making sure the ports were configured as per VMware’s KB, and getting nowhere, we called it a day and I decided to post on the VMware forums. The post can be found here: http://communities.vmware.com/thread/309372

The person who responded gave us an idea to look at: the cables. I faintly remember asking who did the cabling and, as it turned out, of all the cables connected to multiple patch panels, there was one section that had been crimped by the client themselves. Since we were out of options, I asked the client to order a professionally manufactured cable long enough to run from the server over to the Cisco 3750 switches. It took a few days to arrive, but when we finally got it, we went back in to test and, lo and behold, the NICs began connecting at 1Gbps almost immediately.
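A bad crimp fits these symptoms because of how the standards use the wiring: 10BASE-T and 100BASE-TX only use the pairs on pins 1-2 and 3-6, while 1000BASE-T needs all four pairs (1-2, 3-6, 4-5 and 7-8). A crimp that leaves one of the other conductors open can still carry a 100Mbps link but not a gigabit one, and some gigabit NICs and switches will fall back to a lower speed rather than drop the link entirely (often called downshift; support varies by vendor). The Python sketch below models only that continuity check. `best_possible_speed` and `pairs_ok` are hypothetical helpers, and the `pin_ok` input is assumed to come from something like a cable tester.

```python
from typing import Dict, List, Tuple

Pair = Tuple[int, int]

# RJ-45 pin pairs used by each standard (same pin pairing for T568A and T568B).
FAST_ETHERNET_PAIRS: List[Pair] = [(1, 2), (3, 6)]            # 10BASE-T / 100BASE-TX
GIGABIT_PAIRS: List[Pair] = [(1, 2), (3, 6), (4, 5), (7, 8)]  # 1000BASE-T

def pairs_ok(pin_ok: Dict[int, bool], pairs: List[Pair]) -> bool:
    """True if every conductor in the given pairs tested good end to end."""
    return all(pin_ok.get(p, False) and pin_ok.get(q, False) for p, q in pairs)

def best_possible_speed(pin_ok: Dict[int, bool]) -> int:
    """Highest speed in Mbps the wiring can carry, or 0 if no link is possible.

    pin_ok maps pin numbers 1-8 to whether that conductor passed a continuity
    test (hypothetical input, e.g. transcribed from a cable tester).
    """
    if pairs_ok(pin_ok, GIGABIT_PAIRS):
        return 1000
    if pairs_ok(pin_ok, FAST_ETHERNET_PAIRS):
        return 100
    return 0

if __name__ == "__main__":
    crimp = {pin: True for pin in range(1, 9)}
    crimp[7] = False  # hypothetical open conductor on the brown pair (pins 7-8)
    print(best_possible_speed(crimp))  # 100
```

A continuity check is only part of the picture: a marginal crimp can pass it yet still fail at higher speeds because of poor signal quality, which this model does not attempt to capture.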

I’d have to say that this is probably the first time I’ve ever come across such a problem, but it was well worth the frustration of troubleshooting it. It also reminds me of a time when my practice lead at my previous employer told me about a project where he saw a 1Gbps NIC connect at 100Mbps half duplex because someone had managed to use an RJ-11 cable for the connection.

The cables have since all been pulled and replaced with certified cables.

Hope this helps anyone who may run into this issue in the future.

2 comments:

Holy S*1t, hours of frustration! I tried a different cable and it worked. Unbelievable, cheers for this!

If spanning-tree portfast or spanning-tree portfast trunk is set on the Cisco side and it takes more than a couple of seconds to connect, it's almost always a cable issue.

I recommend setting the duplex/speed to auto/auto. There are very few instances when you need to hard set duplex or speed.
